📝Dunning-Kruger effect

- tags

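low performers overestimate themselves; high performers underestimate themselves relative to others, because they assume others find the task as easy as they do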

it is about competence, not IQ or intelligence

the opposite is impostor syndrome

the idea is very old (see the quotes from Socrates, Shakespeare, Darwin and Russell)

learn to say “I don’t know” (?)

ignorance is not the absence of knowledge but “knowing” things that are not true (being misinformed rather than uninformed)

Solutions:

it is easy to overcome once you see how incompetent you are

ask for feedback from other people and consider it even if it is hard to hear

keep working

question what you know

challenge yourself (?)

Resources:

Unskilled and Unaware of It: How Difficulties in Recognizing One’s Own Incompetence Lead to Inflated Self-Assessments (original paper)

Why incompetent people think they’re amazing - David Dunning - YouTube

what the Dunning-Kruger effect is and isn’t

The Dunning-Kruger effect is an example of Dunning-Kruger effect

TODO Clean-up quotes and “rest” section

Quotes

Fact: ‘Dunning–Kruger effect - Wikipedia’

In the field of psychology, the Dunning–Kruger effect is a cognitive bias in which people of low ability have illusory superiority and mistakenly assess their cognitive ability as greater than it is. The cognitive bias of illusory superiority comes from the inability of low-ability people to recognize their lack of ability. Without the self-awareness of metacognition, low-ability people cannot objectively evaluate their competence or incompetence.

Fact: ‘Dunning–Kruger effect - Wikipedia’

David Dunning and Justin Kruger

Fact: ‘Dunning–Kruger effect - Wikipedia’

the cognitive bias of illusory superiority results from an internal illusion in people of low ability and from an external misperception in people of high ability; that is, “the miscalibration of the incompetent stems from an error about the self, whereas the miscalibration of the highly competent stems from an error about others.”

Fact: ‘Dunning-Kruger effect - RationalWiki’

One of the painful things about our time is that those who feel certainty are stupid, and those with any imagination and understanding are filled with doubt and indecision. —Bertrand Russell, The Triumph of Stupidity

Fact: ‘Dunning-Kruger effect - RationalWiki’

The Dunning-Kruger effect is a slightly more specific case of the bias known as illusory superiority, where people tend to overestimate their good points in comparison to others around them, while concurrently underestimating their negative points.

Fact: ‘Dunning-Kruger effect - RationalWiki’

those who found the tasks easy (and thus scored highly) mistakenly thought that they would also be easy for others.

Fact: ‘Dunning-Kruger effect - RationalWiki’

It is not in our human nature to imagine that we are wrong. —Kathryn Schulz

Fact: ‘Dunning-Kruger effect - RationalWiki’

The fool doth think he is wise, but the wise man knows himself to be a fool. —Touchstone, in As You Like It by William Shakespeare

Fact: ‘Dunning-Kruger effect - RationalWiki’

They [Dunning and Kruger] were famously inspired by McArthur Wheeler, a Pittsburgh man who attempted to rob a bank while his face was covered in lemon juice. Wheeler had learned that lemon juice could be used as “invisible ink” (that is, the old childhood experiment of making the juice appear when heated); he therefore got the idea that unheated lemon juice would render his facial features unrecognizable or “invisible.”

After he was effortlessly caught (as he made no other attempts to conceal himself during the robberies), he was presented with video surveillance footage of him robbing the banks in question, fully recognizable. At this, he expressed apparently sincere surprise and lack of understanding as to why his plan did not work — he was not competent enough to see the logical gaps in his thinking and plan.

Fact: ‘Dunning-Kruger effect - RationalWiki’

The idea that people who don’t know that they don’t know (“Dunning-Kruger effect” is so much less confusing than any “know-know” phrase) isn’t particularly new. The Bertrand Russell quote is from the mid 1930s, and even earlier, Charles Darwin, in The Descent of Man in 1871, stated “ignorance more frequently begets confidence than does knowledge.” Even back in ancient Greece, Plato’s Apology attributed to Socrates the quote at the top, which today is often summed up as, roughly, “the wisest people know that they know nothing.”

Fact: ‘We Are All Confident Idiots - Pacific Standard’

One can’t help but feel for the people who fall into Kimmel’s trap. Some appear willing to say just about anything on camera to hide their cluelessness about the subject at hand (which, of course, has the opposite effect). Others seem eager to please, not wanting to let the interviewer down by giving the most boringly appropriate response: I don’t know. But for some of these interviewees, the trap may be an even deeper one. The most confident-sounding respondents often seem to think they do have some clue—as if there is some fact, some memory, or some intuition that assures them their answer is reasonable.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

In many cases, incompetence does not leave people disoriented, perplexed, or cautious. Instead, the incompetent are often blessed with an inappropriate confidence, buoyed by something that feels to them like knowledge.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

The American author and aphorist William Feather once wrote that being educated means “being able to differentiate between what you know and what you don’t.”

Fact: ‘We Are All Confident Idiots - Pacific Standard’

Because it’s so easy to judge the idiocy of others, it may be sorely tempting to think this doesn’t apply to you. But the problem of unrecognized ignorance is one that visits us all. And over the years, I’ve become convinced of one key, overarching fact about the ignorant mind. One should not think of it as uninformed. Rather, one should think of it as misinformed.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

An ignorant mind is precisely not a spotless, empty vessel, but one that’s filled with the clutter of irrelevant or misleading life experiences, theories, facts, intuitions, strategies, algorithms, heuristics, metaphors, and hunches that regrettably have the look and feel of useful and accurate knowledge.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

Because of the way we are built, and because of the way we learn from our environment, we are all engines of misbelief. And the better we understand how our wonderful yet kludge-ridden, Rube Goldberg engine works, the better we—as individuals and as a society—can harness it to navigate toward a more objective understanding of the truth.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

Some of our most stubborn misbeliefs arise not from primitive childlike intuitions or careless category errors, but from the very values and philosophies that define who we are as individuals. Each of us possesses certain foundational beliefs—narratives about the self, ideas about the social order—that essentially cannot be violated: To contradict them would call into question our very self-worth. As such, these views demand fealty from other opinions. And any information that we glean from the world is amended, distorted, diminished, or forgotten in order to make sure that these sacrosanct beliefs remain whole and unharmed.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

One very commonly held sacrosanct belief, for example, goes something like this: I am a capable, good, and caring person. Any information that contradicts this premise is liable to meet serious mental resistance.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

The anthropological theory of cultural cognition suggests that people everywhere tend to sort ideologically into cultural worldviews diverging along a couple of axes: They are either individualist (favoring autonomy, freedom, and self-reliance) or communitarian (giving more weight to benefits and costs borne by the entire community); and they are either hierarchist (favoring the distribution of social duties and resources along a fixed ranking of status) or egalitarian (dismissing the very idea of ranking people according to status). According to the theory of cultural cognition, humans process information in a way that not only reflects these organizing principles, but also reinforces them. These ideological anchor points can have a profound and wide-ranging impact on what people believe, and even on what they “know” to be true.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

The way we traditionally conceive of ignorance—as an absence of knowledge—leads us to think of education as its natural antidote. But education, even when done skillfully, can produce illusory confidence.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

Driver’s education courses, particularly those aimed at handling emergency maneuvers, tend to increase, rather than decrease, accident rates. They do so because training people to handle, say, snow and ice leaves them with the lasting impression that they’re permanent experts on the subject. In fact, their skills usually erode rapidly after they leave the course. And so, months or even decades later, they have confidence but little leftover competence when their wheels begin to spin.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

But, of course, guarding people from their own ignorance by sheltering them from the risks of life is seldom an option. Actually getting people to part with their misbeliefs is a far trickier, far more important task. Luckily, a science is emerging, led by such scholars as Stephan Lewandowsky at the University of Bristol and Ullrich Ecker of the University of Western Australia, that could help.

In the classroom, some of best techniques for disarming misconceptions are essentially variations on the Socratic method. To eliminate the most common misbeliefs, the instructor can open a lesson with them—and then show students the explanatory gaps those misbeliefs leave yawning or the implausible conclusions they lead to. For example, an instructor might start a discussion of evolution by laying out the purpose-driven evolutionary fallacy, prompting the class to question it. (How do species just magically know what advantages they should develop to confer to their offspring? How do they manage to decide to work as a group?) Such an approach can make the correct theory more memorable when it’s unveiled, and can prompt general improvements in analytical skills.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

Then, of course, there is the problem of rampant misinformation in places that, unlike classrooms, are hard to control—like the Internet and news media. In these Wild West settings, it’s best not to repeat common misbeliefs at all. Telling people that Barack Obama is not a Muslim fails to change many people’s minds, because they frequently remember everything that was said—except for the crucial qualifier “not.” Rather, to successfully eradicate a misbelief requires not only removing the misbelief, but filling the void left behind (“Obama was baptized in 1988 as a member of the United Church of Christ”). If repeating the misbelief is absolutely necessary, researchers have found it helps to provide clear and repeated warnings that the misbelief is false. I repeat, false.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

The most difficult misconceptions to dispel, of course, are those that reflect sacrosanct beliefs. And the truth is that often these notions can’t be changed. Calling a sacrosanct belief into question calls the entire self into question, and people will actively defend views they hold dear. This kind of threat to a core belief, however, can sometimes be alleviated by giving people the chance to shore up their identity elsewhere. Researchers have found that asking people to describe aspects of themselves that make them proud, or report on values they hold dear, can make any incoming threat seem, well, less threatening.

Fact: ‘We Are All Confident Idiots - Pacific Standard’

But here is the real challenge: How can we learn to recognize our own ignorance and misbeliefs? To begin with, imagine that you are part of a small group that needs to make a decision about some matter of importance. Behavioral scientists often recommend that small groups appoint someone to serve as a devil’s advocate—a person whose job is to question and criticize the group’s logic. While this approach can prolong group discussions, irritate the group, and be uncomfortable, the decisions that groups ultimately reach are usually more accurate and more solidly grounded than they otherwise would be.

For individuals, the trick is to be your own devil’s advocate: to think through how your favored conclusions might be misguided; to ask yourself how you might be wrong, or how things might turn out differently from what you expect. It helps to try practicing what the psychologist Charles Lord calls “considering the opposite.” To do this, I often imagine myself in a future in which I have turned out to be wrong in a decision, and then consider what the likeliest path was that led to my failure. And lastly: Seek advice. Other people may have their own misbeliefs, but a discussion can often be sufficient to rid a serious person of his or her most egregious misconceptions.

Fact: ‘An Overview of the Dunning-Kruger Effect’

These low performers were also unable to recognize the skill and competence levels of other people, which is part of the reason why they consistently view themselves as better, more capable, and more knowledgeable than others.

Fact: ‘An Overview of the Dunning-Kruger Effect’

Incompetent people tend to:

- Overestimate their own skill levels
- Fail to recognize the genuine skill and expertise of other people
- Fail to recognize their own mistakes and lack of skill

Fact: ‘An Overview of the Dunning-Kruger Effect’

Essentially, these top scoring individuals know that they are better than the average, but they are not convinced of just how superior their performance is compared to others. The problem in this case is not that experts don’t know how well-informed they are; it’s that they tend to believe that everyone else is knowledgeable as well.

Fact: ‘An Overview of the Dunning-Kruger Effect’

Dunning and Kruger suggest that as experience with a subject increases, confidence typically declines to more realistic levels. As people learn more about the topic of interest, they begin to recognize their own lack of knowledge and ability. Then as people gain more information and actually become experts on a topic, their confidence levels begin to improve once again.

Fact: ‘I Came Face to Face with the Dunning-Kruger Effect Today’

“The only true wisdom is knowing you know nothing.”-Socrates

Fact: ‘what the Dunning-Kruger effect is and isn’t – [citation needed]’

People who perform worst at a task tend to think they’re god’s gift to said task, and the people who can actually do said task often display excessive modesty. I suspect we find this sort of explanation compelling because it appeals to our implicit just-world theories: we’d like to believe that people who obnoxiously proclaim their excellence at X, Y, and Z must really not be so very good at X, Y, and Z at all, and must be (over)compensating for some actual deficiency; it’s much less pleasant to imagine that people who go around shoving their (alleged) superiority in our faces might really be better than us at what they do.

Fact: ‘what the Dunning-Kruger effect is and isn’t – [citation needed]’

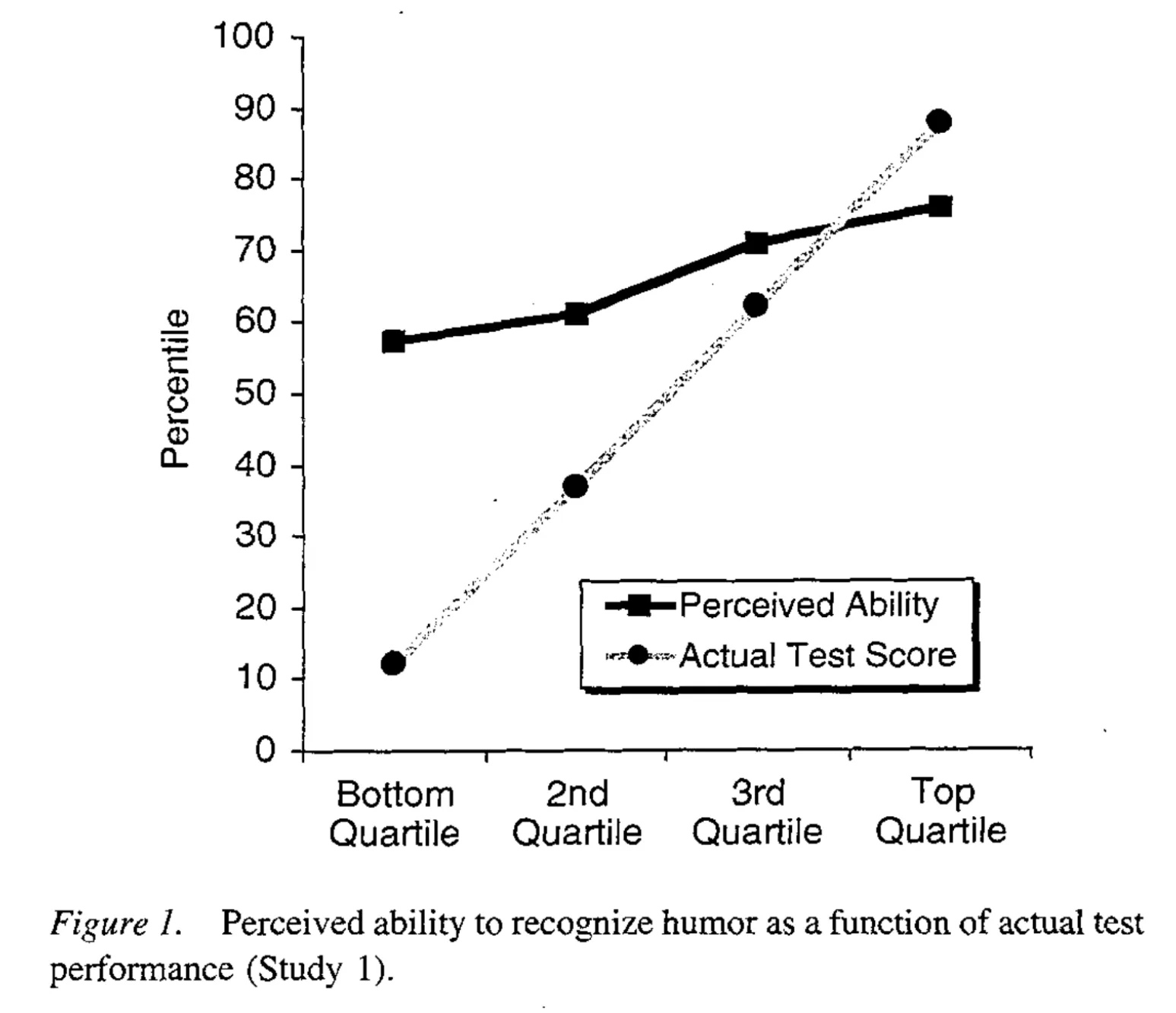

The critical point to note is that there’s a clear positive correlation between actual performance (gray line) and perceived performance (black line): the people in the top quartile for actual performance think they perform better than the people in the second quartile, who in turn think they perform better than the people in the third quartile, and so on. So the bias is definitively not that incompetent people think they’re better than competent people. Rather, it’s that incompetent people think they’re much better than they actually are. But they typically still don’t think they’re quite as good as people who, you know, actually are good. (It’s important to note that Dunning and Kruger never claimed to show that the unskilled think they’re better than the skilled; that’s just the way the finding is often interpreted by others.)

Fact: ‘An expert on human blind spots gives advice on how to think -’

The first rule of the Dunning-Kruger club is you don’t know you’re a member of the Dunning-Kruger club. People miss that.

Fact: ‘An expert on human blind spots gives advice on how to think -’

over the years, the understanding of the effect out there in popular culture has morphed from “poor performers are way overconfident,” to “beginners are way overconfident.” We just published something within the last year where we showed that beginners don’t start out falling prey to the Dunning-Kruger effect, but they get there real quick. So they quickly come to believe they know how to handle a task when they really don’t have it yet.

Rest

TODO Saturday Morning Breakfast Cereal - Heartbreak (Mount Stupid)

http://www.smbc-comics.com/index.php?db=comics&id=2475#comic

TODO Unskilled and Unaware of It: How Difficulties in Recognizing One’s Own Incompetence Lead to Inflated Self-Assessments (original paper)

http://psych.colorado.edu/~vanboven/teaching/p7536_heurbias/p7536_readings/kruger_dunning.pdf

inflated self-assessment

examine the relationship between miscalibrated views of ability and metacognitive skills (knowing which answers one got right and which one got wrong)

test the ability to recognize competence in others

study 1: present participants with a series of jokes and ask them to rate the humor of each one (then compare their ratings with those provided by professional comedians)

To be sure, they had an inkling that they were not as talented in this domain as were participants in the top quartile, as evidenced by the significant correlation between perceived and actual ability. However, that suspicion failed to anticipate the magnitude of their shortcomings

TODO “The Dunning-Kruger Song”, from The Incompetence Opera - YouTube

https://www.youtube.com/watch?v=BdnH19KsVVc

TODO We Are All Confident Idiots - Pacific Standard

https://psmag.com/social-justice/confident-idiots-92793

DONE Why incompetent people think they’re amazing - David Dunning - YouTube

ask for feedback from other people and consider it even if it is hard to hear

keep working

https://www.youtube.com/watch?v=pOLmD_WVY-E

TODO Unskilled and unaware of it: How difficulties in recognizing one’s own incompetence lead to inflated self-assessments.

https://psycnet.apa.org/doiLanding?doi=10.1037%2F0022-3514.77.6.1121

TODO An Overview of the Dunning-Kruger Effect

https://www.verywellmind.com/an-overview-of-the-dunning-kruger-effect-4160740

causes:

an inability to recognize lack of skill and mistakes

a lack of metacognition

a little knowledge can lead to overconfidence

DONE what the Dunning-Kruger effect is and isn’t – [citation needed]

http://www.talyarkoni.org/blog/2010/07/07/what-the-dunning-kruger-effect-is-and-isnt/

Alternative explanations (criticism?)

regression toward the mean.

For instance, in placebo-controlled clinical trials of SSRIs, depressed people tend to get better in both the drug and placebo conditions. Some of this is undoubtedly due to the placebo effect, but much of it is probably also due to what’s often referred to as “natural history”. Depression, like most things, tends to be cyclical: people get better or worse over time, often for no apparent rhyme or reason. But since people tend to seek help (and sign up for drug trials) primarily when they’re doing particularly badly, it follows that most people would get better to some extent even without any treatment.

Regression to the mean plus the better-than-average effect
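The regression-to-the-mean explanation can be illustrated with a small simulation. This is a minimal sketch under assumed, hypothetical parameters (the function `quartile_calibration` and its noise and bias values are illustrative, not taken from Yarkoni's post or the original paper): everyone shares the same upward self-enhancement bias and the same amount of noise in both the test and the self-assessment, and participants are grouped into quartiles by test score.

```python
import random
import statistics


def quartile_calibration(n=10_000, test_noise=1.0, self_noise=1.0,
                         self_enhancement=0.5, seed=0):
    """Return (mean test score, mean self-estimate) for each quartile of test score.

    Hypothetical model (all parameters are illustrative assumptions):
      skill         ~ Normal(0, 1)                        unobserved true ability
      test score    = skill + Normal(0, test_noise)       what the experiment measures
      self-estimate = skill + Normal(0, self_noise) + self_enhancement
    Low performers are not given any extra delusion: everyone shares
    the same noise levels and the same upward bias.
    """
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        skill = rng.gauss(0.0, 1.0)
        test = skill + rng.gauss(0.0, test_noise)
        self_estimate = skill + rng.gauss(0.0, self_noise) + self_enhancement
        people.append((test, self_estimate))

    # Sort by measured test score and split into four equal groups (quartiles).
    people.sort(key=lambda p: p[0])
    size = n // 4
    result = []
    for q in range(4):
        group = people[q * size:(q + 1) * size]
        actual = statistics.fmean(t for t, _ in group)
        perceived = statistics.fmean(s for _, s in group)
        result.append((actual, perceived))
    return result


if __name__ == "__main__":
    for q, (actual, perceived) in enumerate(quartile_calibration(), start=1):
        print(f"quartile {q}: actual {actual:+.2f}  perceived {perceived:+.2f}  "
              f"gap {perceived - actual:+.2f}")
```

Under these assumptions the bottom quartile's self-estimate lands well above its test score and the top quartile's lands below it, even though the model gives low performers no special blind spot. Self-estimates still rise from quartile to quartile, consistent with the positive correlation between actual and perceived performance. Scores are kept in standard-deviation units rather than percentiles to keep the model simple.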

The instrumental role of task difficulty

Harder tasks produce a different curve that does not show the imbalance. When the task is really difficult, poor performers were actually considerably better calibrated than high performers.

DONE An expert on human blind spots gives advice on how to think -

https://publicnewsupdate.com/news/an-expert-on-human-blind-spots-gives-advice-on-how-to-think/

TODO Overconfidence Among Beginners: Is a Little Learning a Dangerous Thing? | Request PDF

TODO The More I Know, the Less I Know - Simple Programmer

https://simpleprogrammer.com/the-more-i-know-the-less-i-know/

TODO I’m a phony. Are you? - Scott Hanselman

https://www.hanselman.com/blog/ImAPhonyAreYou.aspx